If you’re searching for answers because Google won’t index your website, you’re not alone.

Indexing issues are among the most common technical SEO problems we see, especially with new websites, recent redesigns, or platform migrations.

The important thing to understand is this:

If Google isn’t indexing your website, there is always a reason.

The solution starts with identifying which type of issue you’re dealing with.

Let’s walk through the most common causes and how to fix them.

First: Check How Long Indexing Usually Takes

Before assuming something is broken, confirm whether the delay is normal.

For new websites, indexing can take days to weeks depending on technical setup and domain trust. If you’re unsure about expected timelines, review how long indexing usually takes before investigating deeper.

If your site has been live for more than 3–4 weeks with zero indexed pages, it’s time to troubleshoot.

1. Noindex Tags Blocking Your Pages

One of the most common causes of indexing failure is the “noindex” directive.

This can appear:

- In the page’s HTML meta tag

- As an X-Robots-Tag HTTP header

- Via CMS SEO settings

- Through developer staging configurations

If a page contains:

<meta name="robots" content="noindex">

Google will not index it.

This frequently happens when:

- A staging site is pushed live

- WordPress “Discourage search engines” remains enabled

- A developer temporarily blocked indexing

Fix:

Remove the noindex directive and request indexing through Search Console.
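To check a page programmatically, the sketch below looks for a noindex directive in both the HTML and the X-Robots-Tag response header. It is a minimal example using only Python's standard library. The names RobotsMetaParser and has_noindex are illustrative, and the matching is deliberately simplified, so it may not cover every directive form Google supports.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collects the content of <meta name="robots"> and <meta name="googlebot"> tags."""

    def __init__(self):
        super().__init__()
        self.directives = []

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr_map = {name.lower(): (value or "") for name, value in attrs}
        if attr_map.get("name", "").lower() in ("robots", "googlebot"):
            self.directives.append(attr_map.get("content", "").lower())


def has_noindex(html, headers=None):
    """Return True if the HTML or an X-Robots-Tag header carries noindex (or none)."""
    parser = RobotsMetaParser()
    parser.feed(html)
    found = list(parser.directives)
    # Header names are case-insensitive, so compare them in lowercase.
    for name, value in (headers or {}).items():
        if name.lower() == "x-robots-tag":
            found.append(value.lower())
    for directive in found:
        for part in directive.split(","):
            token = part.strip()
            # "none" is shorthand for "noindex, nofollow".
            if "noindex" in token or token == "none":
                return True
    return False
```

In practice you would fetch the live page, then pass its body and response headers to has_noindex. Checking the header matters because a noindex sent by the server never shows up in the page source.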

2. Robots.txt Is Blocking Crawlers

Your robots.txt file controls crawler access.

If a group that applies to Googlebot (for example, under User-agent: *) contains:

Disallow: /

Google cannot crawl your site at all.

Sometimes developers restrict crawling during development and forget to remove it before launch.

Fix:

Review robots.txt and remove blocking rules. Then resubmit your sitemap in Search Console to prompt recrawling.
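To test a rule set before or after editing it, a short script can tell you whether a given URL is crawlable. The sketch below uses Python's built-in urllib.robotparser. The function name is_crawl_allowed and the example.com URLs are placeholders. Python's parser does not replicate every detail of Google's matching, so treat the result as a first check rather than a final verdict.

```python
from urllib.robotparser import RobotFileParser


def is_crawl_allowed(robots_txt, url, user_agent="Googlebot"):
    """Return True if the given robots.txt text lets user_agent fetch url."""
    parser = RobotFileParser()
    # parse() takes an iterable of lines, so no network request is made here.
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


# A leftover development rule that blocks the whole site.
blocked = "User-agent: *\nDisallow: /\n"
# A typical production rule that only blocks an admin area.
production = "User-agent: *\nDisallow: /admin/\n"

print(is_crawl_allowed(blocked, "https://example.com/services"))
print(is_crawl_allowed(production, "https://example.com/services"))
```

The first call reports the page as blocked and the second as allowed, which is the difference between a forgotten staging rule and a normal live configuration.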

3. Sitemap Errors or Misconfiguration

If your sitemap:

- Contains broken URLs

- Lists redirected pages

- Includes noindex URLs

- Isn’t submitted

- Returns server errors

Google may struggle to process your site properly.

A sitemap should contain only live, canonical URLs.

Fix:

Audit your XML sitemap structure and resubmit it via Search Console.
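A basic audit can be scripted. The sketch below reads the URLs from a standard XML sitemap and flags entries that redirect, return errors, or carry noindex. It assumes you have already collected each URL's HTTP status code (the statuses argument) and a set of noindexed URLs. The function names and those inputs are illustrative, not part of any Search Console tooling.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_urls(xml_text):
    """Return every <loc> value from a standard XML sitemap (not a sitemap index)."""
    root = ET.fromstring(xml_text)
    return [
        loc.text.strip()
        for loc in root.findall("sm:url/sm:loc", SITEMAP_NS)
        if loc.text
    ]


def flag_sitemap_entries(urls, statuses, noindex_urls=frozenset()):
    """Map each problematic URL to a short reason; clean URLs are omitted.

    statuses: dict of URL -> HTTP status code gathered separately (assumed input).
    noindex_urls: set of URLs known to carry a noindex directive (assumed input).
    """
    problems = {}
    for url in urls:
        status = statuses.get(url)
        if status is None:
            problems[url] = "not checked"
        elif 300 <= status < 400:
            problems[url] = f"redirects ({status})"
        elif status >= 400:
            problems[url] = f"broken or server error ({status})"
        elif url in noindex_urls:
            problems[url] = "noindex"
    return problems
```

Any URL that appears in the result is a candidate for removal from the sitemap, or for a fix on the page itself, before you resubmit.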

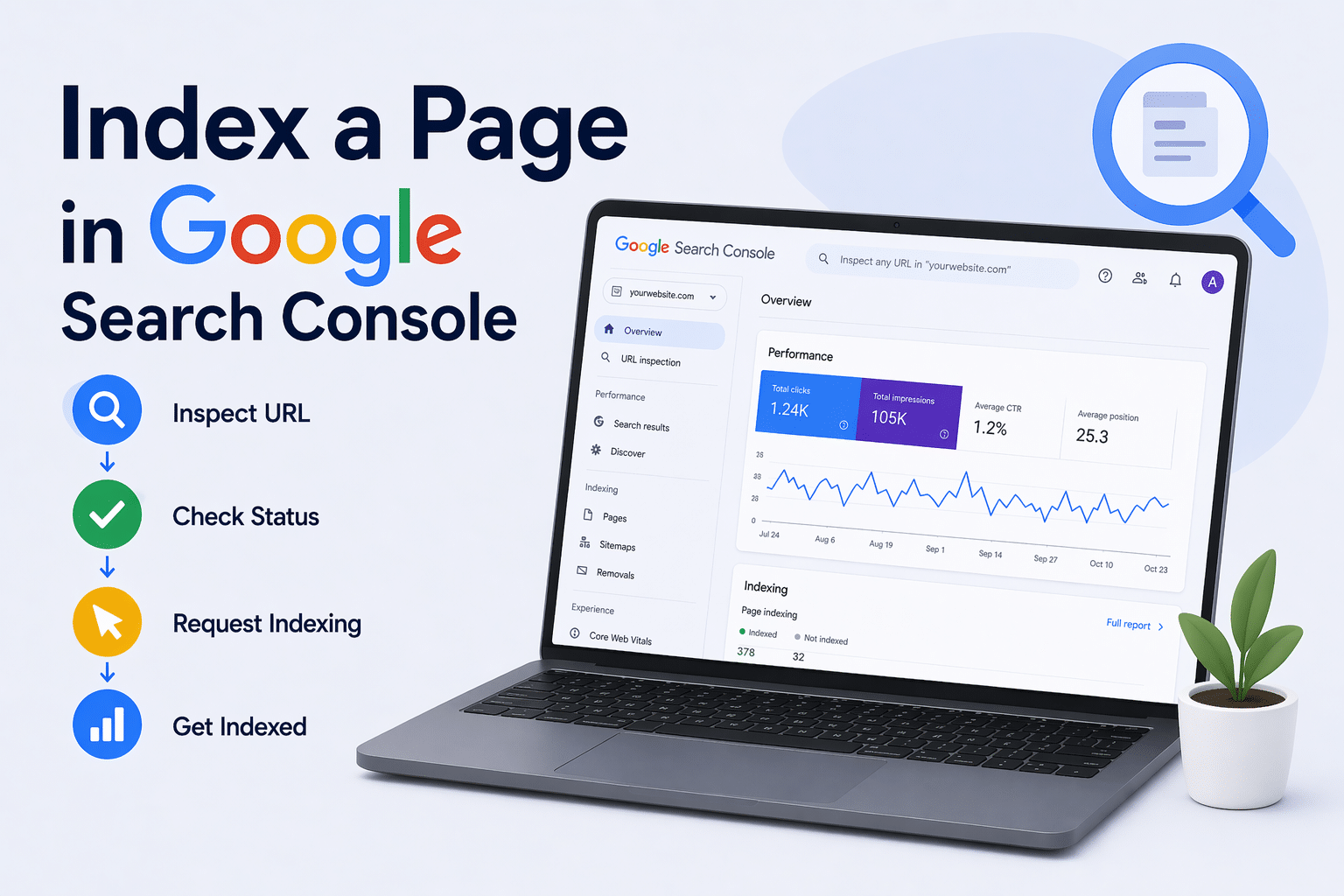

4. Crawled – Currently Not Indexed

This status appears frequently in Search Console.

It means Google has crawled the page but chosen not to index it.

Common reasons include:

- Thin content

- Duplicate content

- Weak internal linking

- Low perceived value

Google evaluates content quality before adding it to its index.

Fix:

Improve content depth, clarity, and internal linking. Then request indexing through Search Console.

5. Discovered – Currently Not Indexed

This status indicates Google knows the URL exists but hasn’t crawled it yet.

Often caused by:

- Large websites

- Poor crawl budget allocation

- Weak site authority

- Inadequate internal linking

Fix:

Strengthen internal links to the page and ensure your sitemap reflects priority URLs.

6. Duplicate Content or Canonical Issues

If your site has multiple versions of similar content, Google may index only one and exclude the rest.

This happens with:

- HTTP vs HTTPS versions

- www vs non-www versions

- Parameter URLs

- Blog tag/category duplication

Fix:

Ensure canonical tags are correct and consistent.
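One way to verify this is to read the canonical tag from each page and compare it with the URL you expect Google to index. The sketch below does that with Python's standard library. The class and function names and the four status labels are illustrative, and the comparison is a plain string match, so it treats differences such as a trailing slash as a mismatch.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin


class CanonicalParser(HTMLParser):
    """Collects the href of every <link rel="canonical"> tag on a page."""

    def __init__(self):
        super().__init__()
        self.canonicals = []

    def handle_starttag(self, tag, attrs):
        if tag != "link":
            return
        attr_map = {name: (value or "") for name, value in attrs}
        # rel can hold several space-separated values.
        if "canonical" in attr_map.get("rel", "").lower().split():
            self.canonicals.append(attr_map.get("href", ""))


def canonical_status(page_url, html):
    """Classify a page's canonical as missing, conflicting, self, or points elsewhere."""
    parser = CanonicalParser()
    parser.feed(html)
    # Resolve relative hrefs against the page URL before comparing.
    hrefs = [urljoin(page_url, href) for href in parser.canonicals if href]
    if not hrefs:
        return "missing"
    if len(set(hrefs)) > 1:
        return "conflicting"
    return "self" if hrefs[0] == page_url else "points elsewhere"
```

Running this against the HTTP, HTTPS, www, and non-www versions of the same page should show every variant pointing at one preferred URL. A "conflicting" or "missing" result is worth investigating first.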

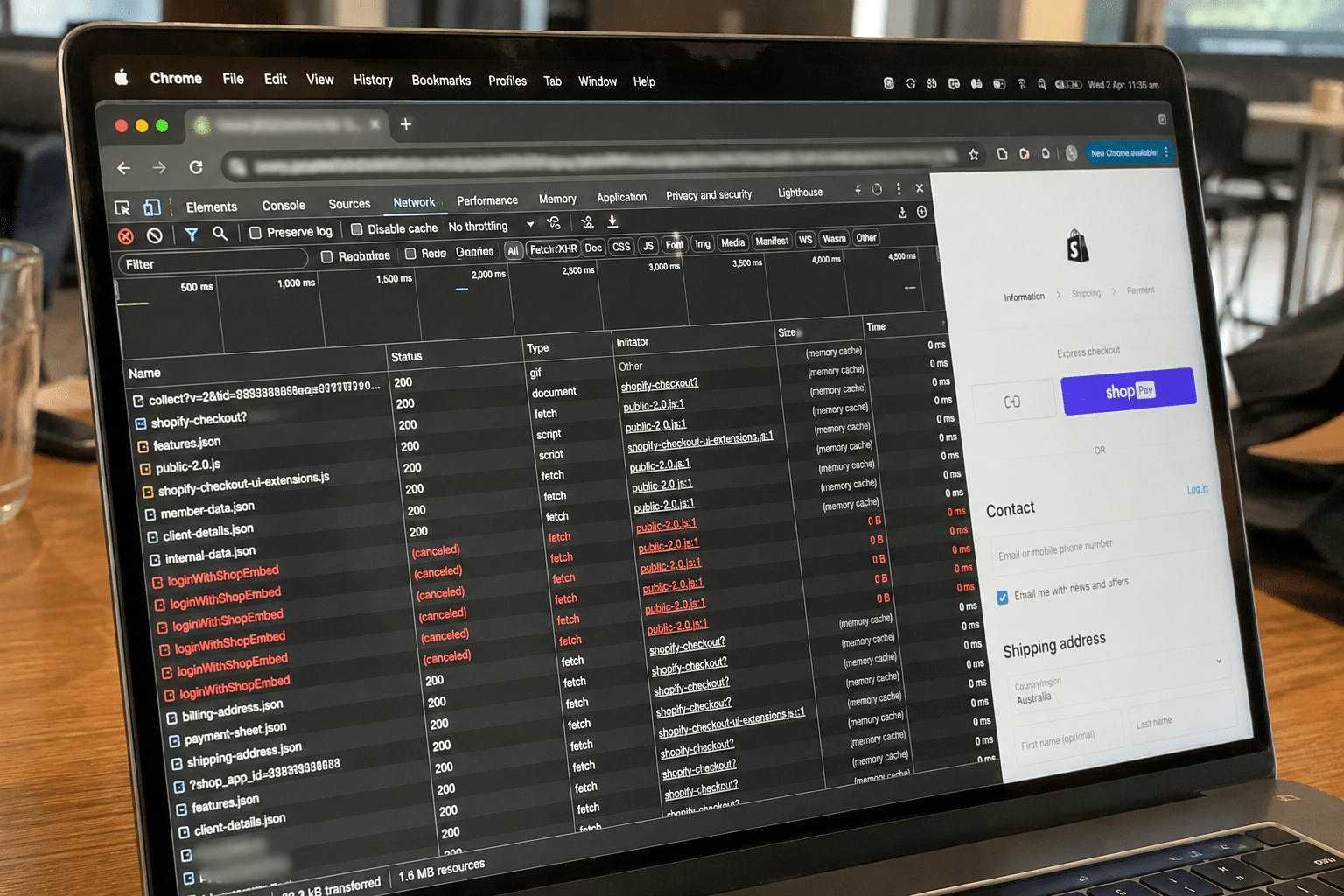

7. Hosting or Server Problems

Google cannot index pages it cannot reliably access.

Common hosting-related issues include:

- Frequent downtime

- Slow server response times

- Shared hosting overload

- Firewall blocks

If Googlebot encounters repeated server errors (5xx), it reduces crawl frequency.

Because hosting, infrastructure, and SEO are interconnected, indexing problems often stem from technical environments rather than content alone.
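If you have access to server logs, a quick summary of the response codes served to Googlebot can show whether server errors are a factor. The sketch below assumes you have already extracted those status codes into a list. The function name is illustrative, and no threshold is implied, since what counts as too many errors depends on the site.

```python
from collections import Counter


def status_class_summary(status_codes):
    """Return the share of responses in each class (2xx, 3xx, 4xx, 5xx)."""
    counts = Counter(f"{code // 100}xx" for code in status_codes)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {status_class: count / total for status_class, count in counts.items()}


# Example: status codes taken from Googlebot requests in a server log (made-up data).
sample = [200, 200, 503, 500]
print(status_class_summary(sample))
```

A noticeable share of 5xx responses in the summary suggests looking at hosting before revisiting content.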

8. Manual Actions or Security Issues

In rare cases, indexing may be impacted by:

- Manual penalties

- Malware

- Hacked content

- Spam signals

These issues appear in the “Security & Manual Actions” section of Search Console.

Fix:

Resolve the flagged issue, then request a review in Search Console (for manual actions, this is a reconsideration request).

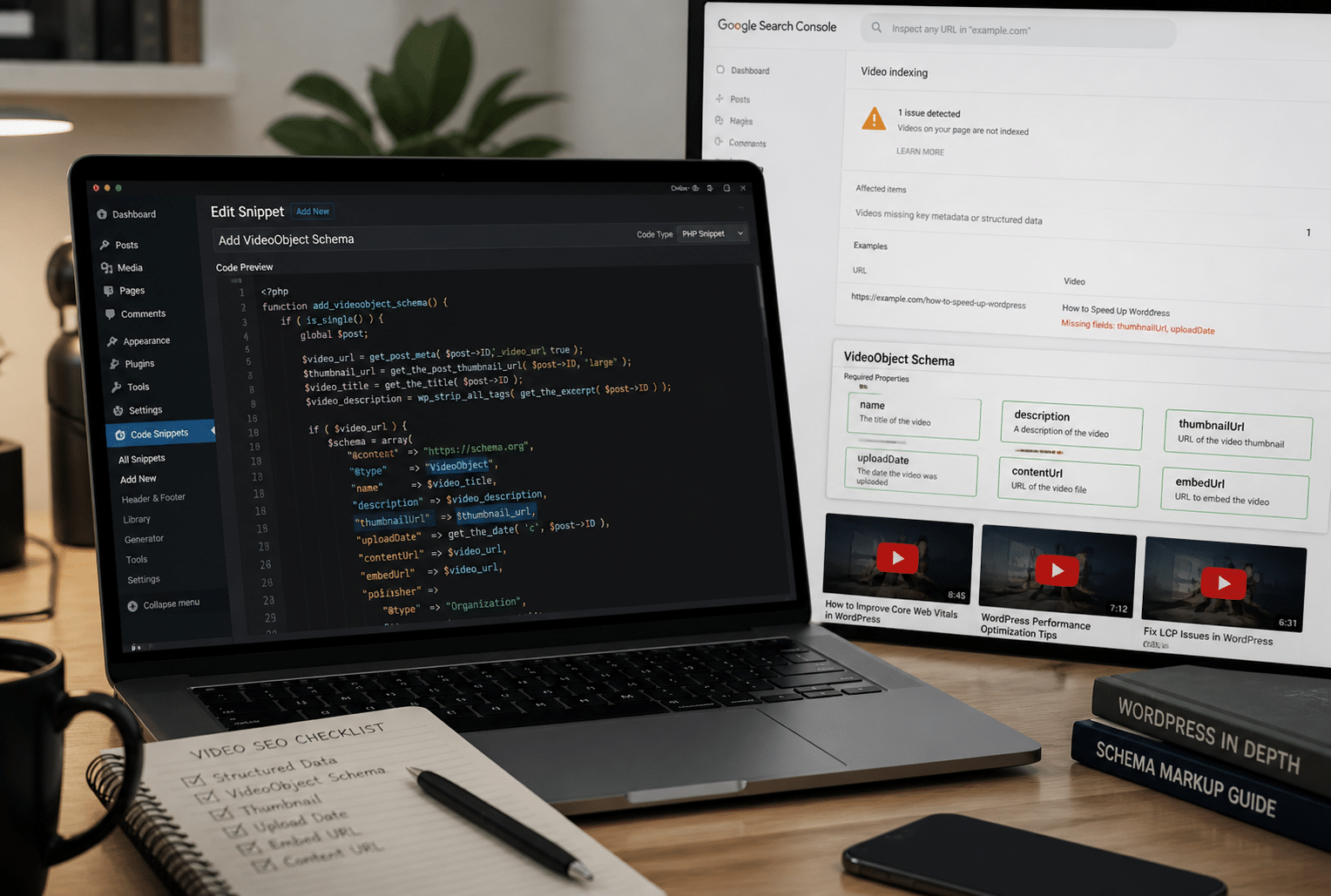

How to Diagnose Indexing Issues Properly

Instead of guessing, follow a structured approach:

- Check the Page indexing report (formerly Coverage) in Search Console

- Inspect affected URLs

- Review robots.txt

- Check for noindex tags

- Audit sitemap

- Evaluate content quality

- Check server response codes

If your pages still aren’t appearing after you complete these steps, a deeper technical investigation is required.
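The technical checks in this list can be combined into a single pass per URL. The sketch below is one possible way to do it. PageSignals and triage are hypothetical names, and the values are assumed to come from your own checks of robots.txt, the page's directives, the sitemap, and the server response. Content quality is left out because it needs human judgment.

```python
from dataclasses import dataclass


@dataclass
class PageSignals:
    """Results of the individual checks for one URL (all gathered separately)."""
    blocked_by_robots: bool
    has_noindex: bool
    in_sitemap: bool
    status_code: int


def triage(signals):
    """Return every technical issue found for a URL, in checklist order."""
    findings = []
    if signals.blocked_by_robots:
        findings.append("robots.txt blocks crawling")
    if signals.has_noindex:
        findings.append("noindex directive present")
    if not signals.in_sitemap:
        findings.append("URL missing from sitemap")
    if signals.status_code >= 500:
        findings.append(f"server error ({signals.status_code})")
    elif signals.status_code >= 400:
        findings.append(f"client error ({signals.status_code})")
    elif signals.status_code >= 300:
        findings.append(f"redirect ({signals.status_code})")
    return findings
```

The function returns all findings rather than stopping at the first one, because a page can have more than one problem at the same time.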

Why Indexing Problems Are Often Layered

Many businesses assume indexing is a single issue.

In reality, it’s often layered:

- Weak content + no sitemap

- Noindex tag + crawl delay

- Hosting instability + thin content

That’s why repeated manual requests rarely solve the underlying cause.

Indexing is a result of technical clarity, content value, and crawl accessibility working together.

When to Seek Technical SEO Support

If you’ve:

- Removed noindex tags

- Fixed robots.txt

- Updated your sitemap

- Improved content

- Requested indexing

and your site still isn’t appearing, it’s time for a structured technical review.

Because Arvo integrates website development, AWS-backed hosting, and technical SEO, we regularly diagnose indexing failures that originate from misaligned CMS settings, infrastructure limitations, or canonical misconfiguration.

Experiencing indexing problems? Our Brisbane-based SEO specialists can diagnose and resolve technical issues as part of our broader digital services in Brisbane and Australia-wide.

Frequently Asked Questions

Why is Google not indexing my site?

Common reasons include noindex tags, robots.txt blocks, duplicate content, thin content, crawl budget issues, or hosting instability.

How do I fix noindex issues?

Remove the noindex directive from your page or CMS settings, then request indexing in Search Console.

How do I check robots.txt?

Visit yourdomain.com/robots.txt and review whether important pages are disallowed.

Can hosting affect indexing?

Yes. Slow or unstable servers reduce crawl efficiency and can prevent indexing entirely.